For some years now Google has had DeepMind, its advanced artificial intelligence lab, which has already given important demonstrations of its capabilities by repeatedly defeating Go masters. DeepMind also continues to grow by taking on new challenges, such as learning to manipulate physical objects and even to play StarCraft II.

Google knows that the day will come when DeepMind's systems have to face other artificial intelligence systems, and that moment will be decisive in showing whether they are able to collaborate or will come into conflict, each defending its own interests. Google has therefore decided to anticipate that scenario by making two artificial intelligence agents interact with each other in a series of social dilemmas.

To be selfish or to seek the common good

Today Google is releasing the results of an interesting study by DeepMind, in which researchers tested the reactions and collaborative capabilities of two artificial intelligence agents. The goal is to find out how they respond to situations in which an agent can benefit from being selfish and blocking its partner, but in which both lose out if both are selfish. Yes, something based on the famous prisoner’s dilemma.
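To make the dilemma concrete, here is a minimal sketch of the classic prisoner's dilemma in Python. The payoff numbers are the usual textbook convention and are an assumption for illustration only; they are not taken from the DeepMind study, and the names `PAYOFFS` and `best_response` are hypothetical.

```python
# Illustrative payoffs only: textbook values, not figures from the study.
COOPERATE, DEFECT = "cooperate", "defect"

# PAYOFFS[(my_move, partner_move)] -> (my_points, partner_points)
PAYOFFS = {
    (COOPERATE, COOPERATE): (3, 3),
    (COOPERATE, DEFECT): (0, 5),
    (DEFECT, COOPERATE): (5, 0),
    (DEFECT, DEFECT): (1, 1),
}


def best_response(partner_move):
    """Return the move that maximizes my own payoff against a fixed partner move."""
    return max(
        (COOPERATE, DEFECT),
        key=lambda mine: PAYOFFS[(mine, partner_move)][0],
    )


if __name__ == "__main__":
    for partner in (COOPERATE, DEFECT):
        print(f"partner plays {partner}: my best response is {best_response(partner)}")
    print("both selfish:", PAYOFFS[(DEFECT, DEFECT)])
    print("both cooperative:", PAYOFFS[(COOPERATE, COOPERATE)])
```

With these assumed numbers, being selfish is the best individual reply whatever the partner does, yet two selfish players each end up with fewer points than two cooperative ones. That tension is what the two games below put to the test.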

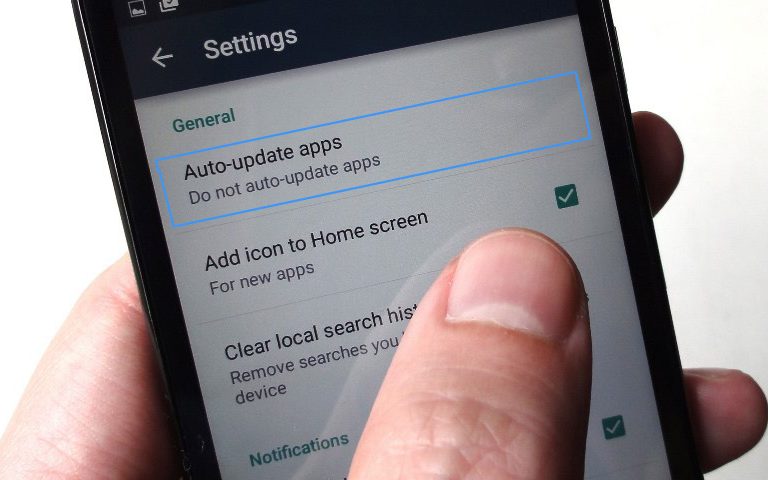

The researchers tested the response of both systems in two basic video games. In the first, called ‘Gathering’, the agents are represented by a red dot and a blue dot and must collect green dots (apples) to score points. The interesting part is that each agent can fire a laser at its opponent to paralyze it momentarily, leaving itself free to collect more apples and therefore more points.

The result shows that while apples are abundant, neither agent bothers to paralyze its opponent. That changes dramatically when stocks decline, which is when both agents showed their most aggressive side and tried to block their rival. Interestingly, when an agent with more computing power was introduced, it always tried to paralyze its opponent, regardless of how many apples were available.

Seeing this behavior, the researchers hypothesized that firing the laser requires more processing power, since the agent has to track the opponent and anticipate its movements in order to hit it. That would explain why the attack strategy appeared only in the most “intelligent” agent, while the “normal” agent chose to ignore its opponent and spend its valuable time collecting more apples.

The second game is called ‘Wolfpack’. Here the agents, shown in red, act like wolves hunting prey, shown in blue, in a scene full of obstacles. The agent that captures the prey earns points, but if the other agent is nearby at the moment of capture, it also receives points.
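The Wolfpack scoring rule described above can be sketched roughly as follows. This is only an illustration of the rule as this article states it: the function name `wolfpack_rewards`, the `capture_radius` and `points` parameters and their default values are all assumptions, not details from the study.

```python
import math


def wolfpack_rewards(wolf_positions, capturer, prey_position,
                     capture_radius=2.0, points=1.0):
    """Return the points each wolf earns at the moment `capturer` catches the prey.

    The capturing wolf always scores. Any other wolf within `capture_radius`
    of the prey (a hypothetical threshold) scores as well.
    """
    rewards = {}
    for name, position in wolf_positions.items():
        is_near = math.dist(position, prey_position) <= capture_radius
        rewards[name] = points if (name == capturer or is_near) else 0.0
    return rewards


if __name__ == "__main__":
    prey = (0.0, 0.0)
    # The second wolf is close to the prey, so both wolves score.
    print(wolfpack_rewards({"a": (0.0, 0.0), "b": (1.0, 1.0)}, "a", prey))
    # The second wolf is far away, so only the capturer scores.
    print(wolfpack_rewards({"a": (0.0, 0.0), "b": (5.0, 5.0)}, "a", prey))
```

Under a rule like this, staying close to the other wolf costs nothing and can only add points, which is the kind of incentive that favors cooperation.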

Here the behavior was interesting but not surprising: both agents chose to collaborate in the hunt, since the reward benefited both of them. Curiously, the most powerful agent also sought to cooperate with the other rather than act on its own, since in the end the other agent served as a help.

The key is in the context

The researchers' conclusions tell us that the behavior of the agents depends to a great extent on the context. The key lies in the rules set for the interaction: if those rules reward aggressive behavior, as in Gathering, the agent will fight to win. But if collaborative work is rewarded, as in Wolfpack, both agents will seek the common good.

This tells us that in the future, when artificial intelligence has a greater presence, it will be decisive that the rules point toward continuous cooperation, that is, rules that are clear, concise and applied in the right place. At the slightest sign of conflict, competition or selfishness, we could face a situation that affects the entire ecosystem and, of course, our society.
