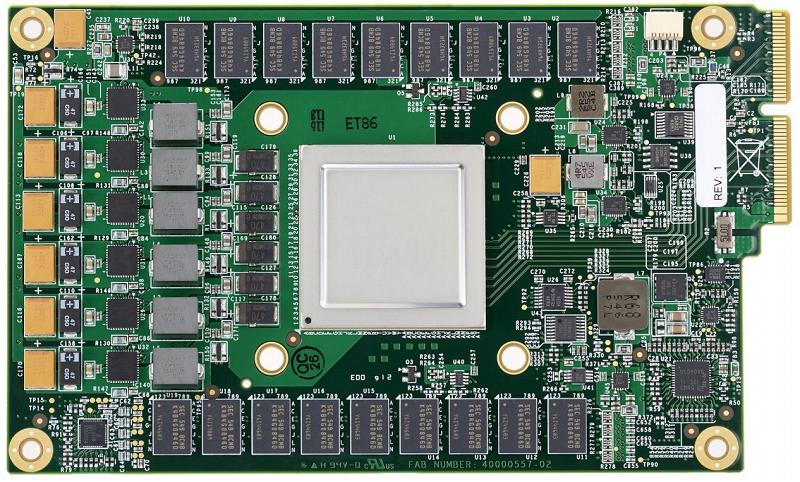

Although it has not received the hype it deserves, the whole industry knows that Google is developing its own hardware: processors designed to accelerate artificial intelligence and machine learning algorithms.

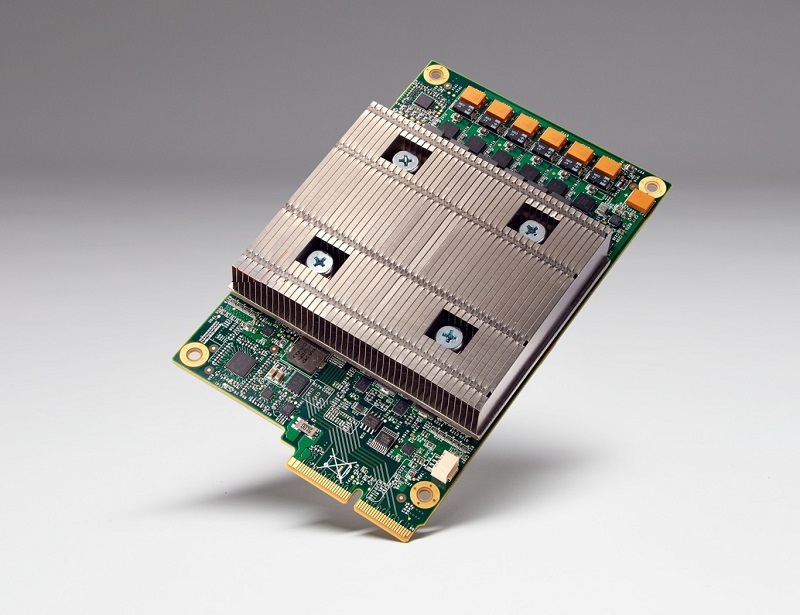

Google introduced this new processor last year at its Google I/O developer event. It is called the Tensor Processing Unit (TPU), and we have had no further details about it since then.

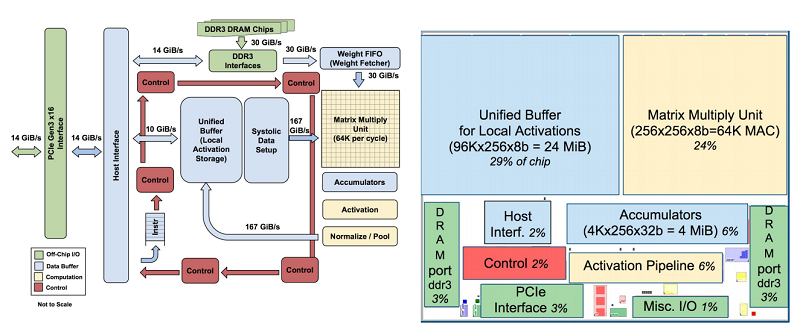

Google, like every company that wants to make a difference in optimization and performance, has to create solutions tailored to new needs and tasks. These chips are built to run TensorFlow, Google’s open source library for machine learning.

TPU, for internal use

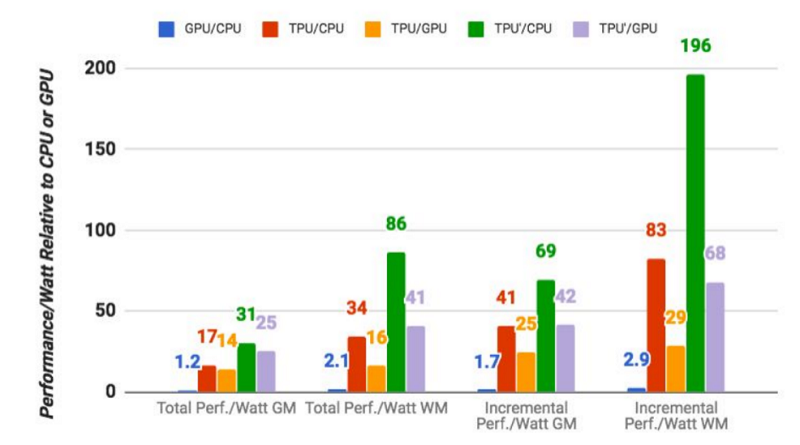

Making the management of our photos, our mail, or the Google Assistant more effective depends on these TPUs. Google claims that its processors achieve performance between 10 and 30 times higher than that of today's conventional components (Intel Haswell CPUs paired with Nvidia K80 GPUs).

The improvement does not come at the cost of higher energy use or more heat, two important considerations for installation in data centers.

Google intends to use this hardware internally, in its own cloud data centers; it does not plan to sell the solution to third parties or license the technology. It does expect others to follow in its footsteps with similar developments and help raise the bar.

Another point worth noting is that TPUs scale easily, so a single server can be powered by multiple TPUs. The processors are ASICs: application-specific integrated circuits.

For those who want to know more, there is a document aimed squarely at processor designers; I get lost at that level. That document is the source of diagrams and graphs like the ones shown here.
